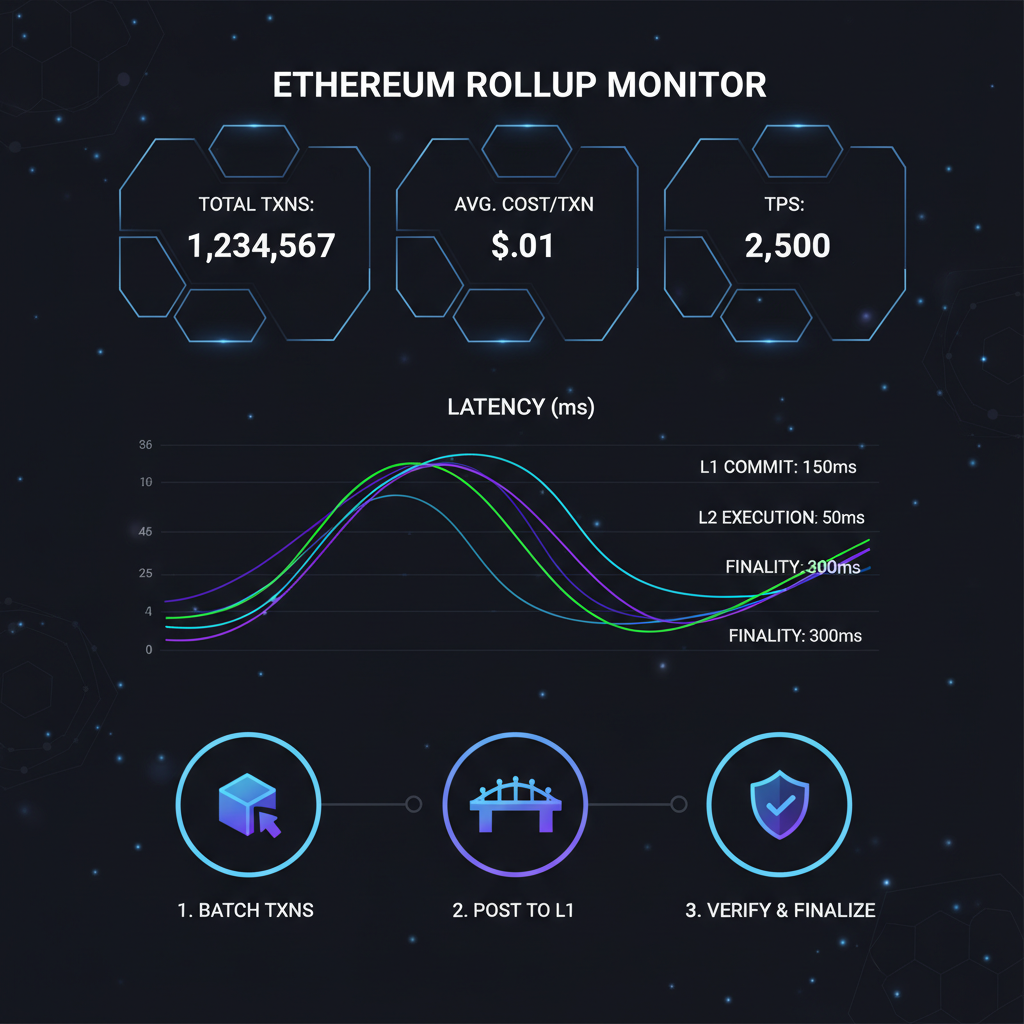

In the high-stakes game of Ethereum rollups, shared sequencer monitoring isn't just a nice-to-have; it's your edge for spotting Ethereum rollup latency spikes before they tank performance. Picture this: multiple rollups relying on one sequencer to order transactions. When latency creeps in or a reorg hits, your batches lag, users bail, and decentralization dreams falter. At SharedSeqWatch.com, we track these metrics live, turning raw data into swing trades on L2 momentum. Let's dive into why watching sequencer reorgs and latency is non-negotiable for rollup operators chasing peak efficiency.

Shared Sequencers Explained: Aggregating Order for Rollup Scale

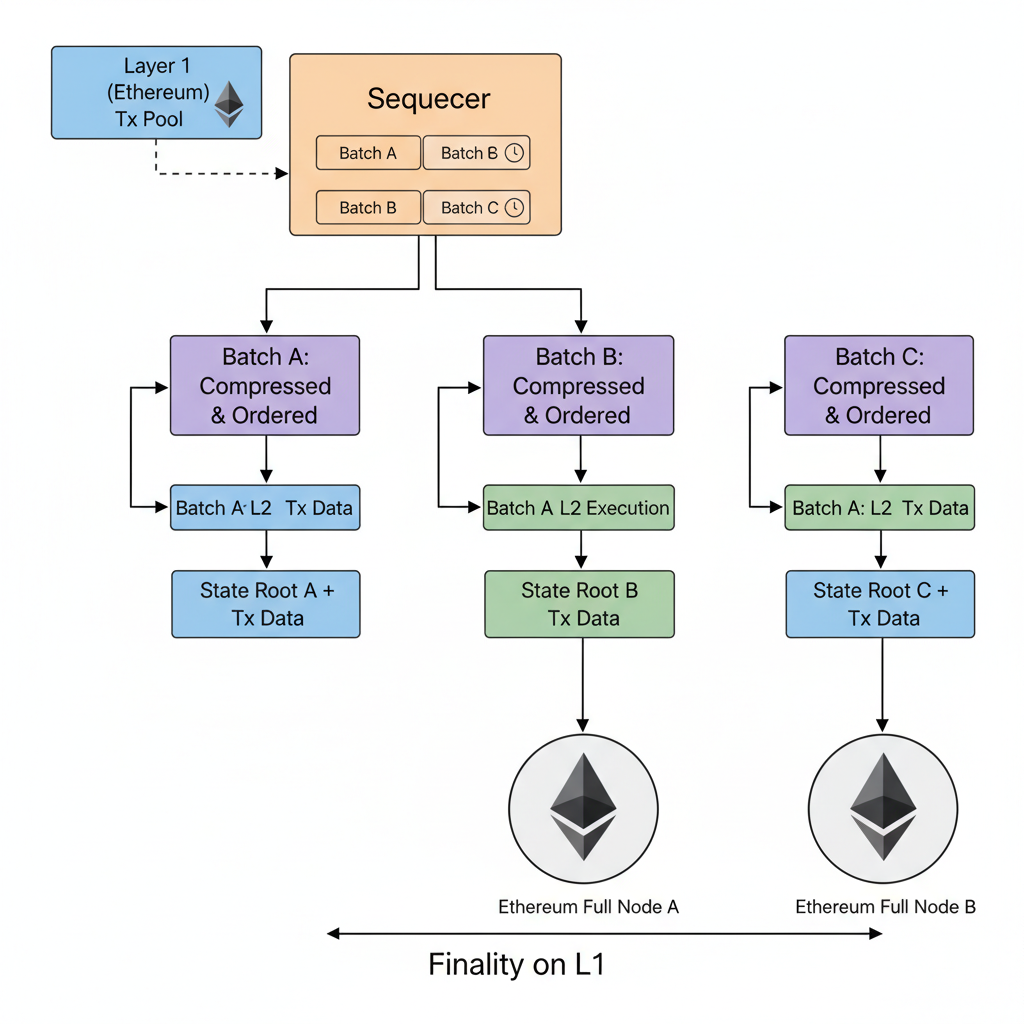

Shared sequencers act as a public good, pooling transaction ordering across rollups to slash redundancy and boost throughput. Instead of each rollup running its own sequencer, they share the load, monitoring L1 for inputs and spitting out ordered blocks. But here's the rub: reading finalized L1 blocks guarantees safety yet adds precious seconds of Ethereum rollup latency. Go for preconfirmations or non-finalized reads? You shave time but invite sequencer reorgs, where a batch gets orphaned and takes every dependent rollup output down with it.

Ethereum Technical Analysis Chart

Analysis by Market Analyst | Symbol: BINANCE:ETHUSDT | Interval: 1D | Drawings: 6

Technical Analysis Summary

In my balanced technical style, start by drawing a prominent downtrend line connecting the swing high on 2026-01-03 at 4523 to the recent low on 2026-02-05 at 2918, extending it forward as dynamic resistance. Add horizontal lines at key support 2918 (strong) and 2800 (weak), and resistance at 3205 (moderate) and 3812 (strong). Apply Fibonacci retracement from the 2026-01-03 high to 2026-02-05 low, highlighting 38.2% at ~3450 as potential bounce target. Mark a recent consolidation rectangle from 2026-02-01 (2980-3100) to current. Use callouts for volume spike on breakdown and MACD bearish crossover. Add long entry zone at 2920-2950 with stop below 2900 and target 3450. Vertical line at 2026-01-25 for breakdown event.

Risk Assessment: medium

Analysis: Downtrend intact but oversold at support with positive ETH L2 news backdrop; medium volatility expected with reorg risks in sequencers adding uncertainty

Market Analyst's Recommendation: Consider long positions on confirmation above 3050 with tight stops and scale in at medium risk; avoid shorts near support.

Key Support & Resistance Levels

📈 Support Levels:

- $2,918 - Recent swing low with volume spike, key hold level (strong)

- $2,800 - Psychological round number and prior minor low (weak)

📉 Resistance Levels:

- $3,205 - Recent pullback high, initial test on bounce (moderate)

- $3,812 - 50% fib retrace and prior swing high (strong)

Trading Zones (medium risk tolerance)

🎯 Entry Zones:

- $2,935 - Bounce from strong support at 2918 with volume confirmation, aligns with minor uptrend (medium risk)

🚪 Exit Zones:

- $3,450 - 💰 Profit target: 38.2% Fibonacci retracement level from recent high-low swing

- $2,880 - 🛡️ Stop loss: below recent low and trendline support to invalidate bounce

Technical Indicators Analysis

📊 Volume Analysis:

Pattern: High volume on downside breakdown followed by decreasing volume on pullback

Confirms selling pressure exhaustion at lows, watch for rising volume on green candles

📈 MACD Analysis:

Signal: Bearish crossover with histogram contracting

MACD line below signal, but momentum divergence as price makes lower low while histogram less negative—potential reversal signal

Disclaimer: This technical analysis by Market Analyst is for educational purposes only and should not be considered as financial advice. Trading involves risk, and you should always do your own research before making investment decisions. Past performance does not guarantee future results. The analysis reflects the author's personal methodology and risk tolerance (medium).

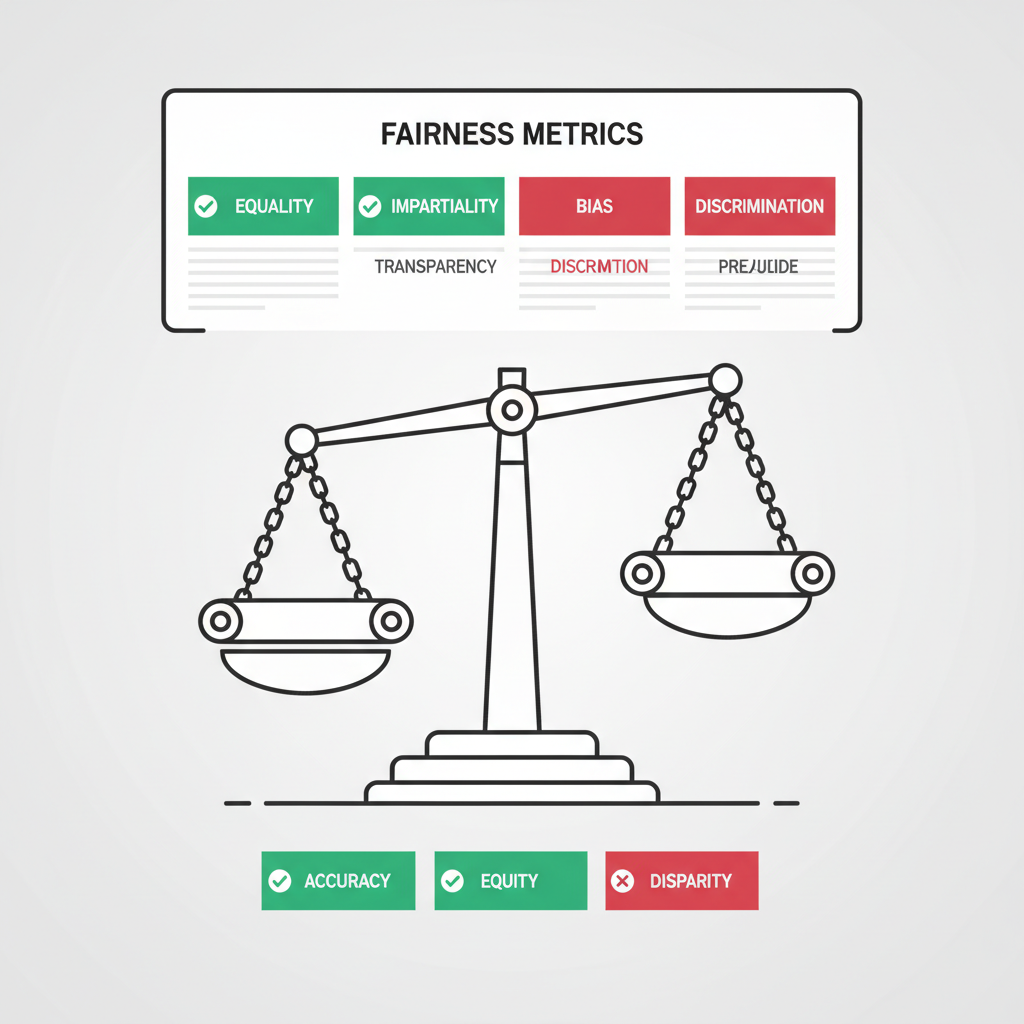

This setup shines for decentralization. Centralized sequencers, as reports like 'Ethereum's Rollups are Centralized' point out, create single points of failure. Shared ones distribute risk, but only if you monitor fairness and performance. I've swung trades on dips when latency jumped 20%, buying low on rollups with quick recoveries via SharedSeqWatch.com signals.

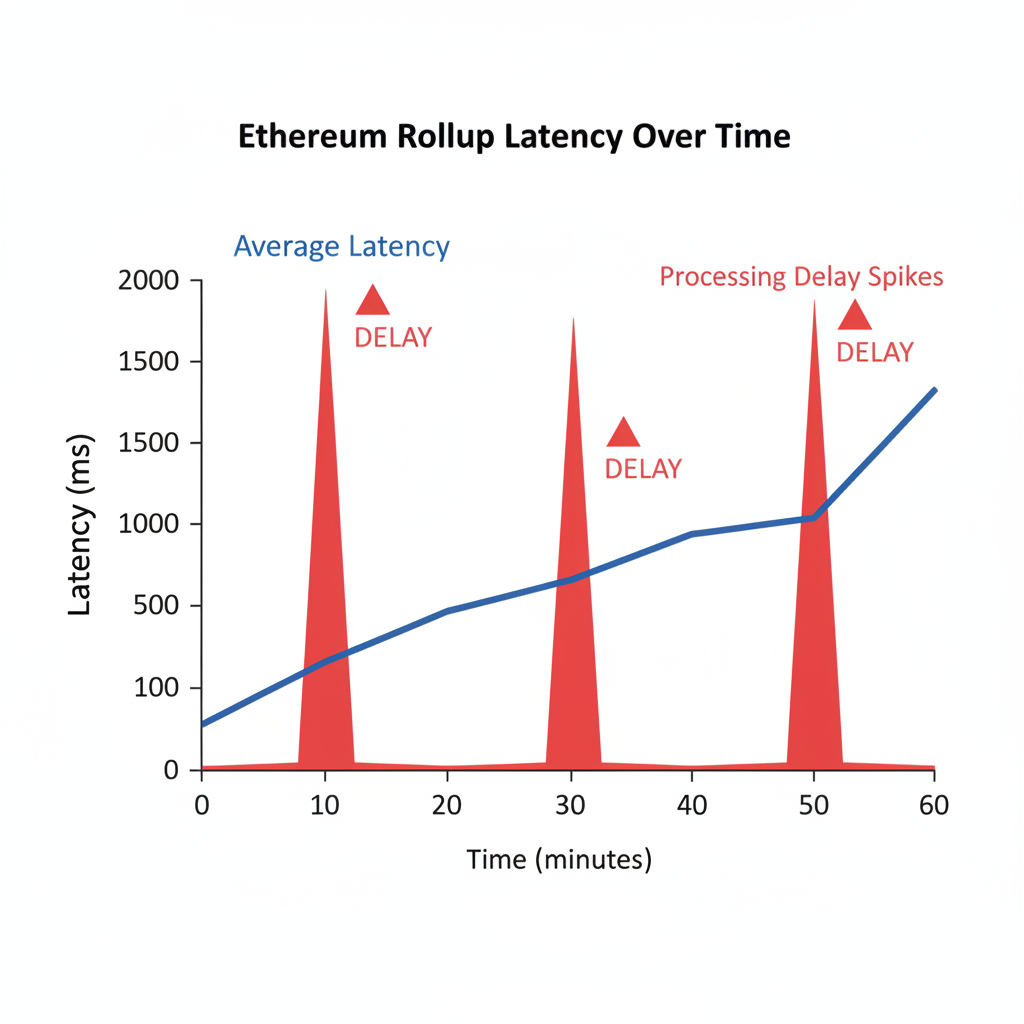

Latency Pitfalls: From L1 Reads to Rollup Delays

Ethereum rollup latency stems from that L1 dependency. Finalized blocks? Rock-solid but slow, as sequencers wait for confirmation. Preconfs speed things up, yet network hiccups mean reorgs. Rollups fetching from shared ledgers, like in HotShot setups, must poll namespaces constantly, amplifying delays during congestion.

Actionable tip: Benchmark against industry standards. If your rollup's latency exceeds 5 seconds average, probe sequencer uptime. Tools reveal bottlenecks, letting you tweak batch posting like Arbitrum's sequencer does, adapting to network costs dynamically.
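As a rough illustration of that check, here is a minimal sketch that averages per-batch latency and flags a breach of the 5-second mark. It assumes you already collect submit and confirm timestamps for each batch; the helper names and sample values are hypothetical, and only the threshold comes from the tip above.

```python
from statistics import mean

LATENCY_THRESHOLD_S = 5.0  # average-latency threshold from the tip above


def mean_latency(samples):
    """Average latency in seconds from (submitted_at, confirmed_at) pairs."""
    return mean([confirmed - submitted for submitted, confirmed in samples])


def needs_probe(samples, threshold=LATENCY_THRESHOLD_S):
    """True when average latency exceeds the threshold, i.e. check sequencer uptime."""
    return mean_latency(samples) > threshold


# Hypothetical timestamps (seconds) for four batches: latencies 3, 6, 7 and 8.
samples = [(0.0, 3.0), (10.0, 16.0), (20.0, 27.0), (30.0, 38.0)]
print(mean_latency(samples), needs_probe(samples))
```

An average hides tail spikes, so in practice you may want to track a high percentile alongside it.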

Comparison of Latency Sources for Ethereum Rollups

| Source | Average Latency | Characteristics |
|---|---|---|
| Finalized L1 | 10s | Safe, low reorg risk 🛡️ |
| Preconfs | 2s | Fast, high reorg risk ⚠️ |
| Shared Sequencer | 4s | Balanced, fairness score ⚖️ |

Optimistic and ZK-rollups handle this differently. Optimistic rollups lean on fraud proofs after a reorg, while ZK-rollups demand upfront proof compute, making low-latency sequencing critical for real-time apps.

Reorg Risks Unpacked: Protecting Batches in Shared Environments

Sequencer reorgs hit when the L2 state used during pre-execution lags behind the chain, as research on Denial of Sequencing attacks flags. If a transaction is checked against stale or forked state, bad actors can slip malicious txs through. In shared setups, one rollup's reorg cascades if batches aren't conditioned properly.

Batch conditioning is your shield: each rollup batch references its dependency, forcing L2 to reorg the whole chain if upstream fails. Shared sequencers with L1 integration add atomicity, ensuring cross-rollup consistency. Transitioning to based rollups, per Ethereum Research roadmaps, decentralizes further, but demands vigilant shared sequencer monitoring for rollup performance benchmarks.
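To make the cascade concrete, here is a minimal sketch of how conditioned batches propagate a reorg. It assumes each batch declares the upstream batches it references; the `depends_on` map, the batch IDs, and the function name are hypothetical and not taken from any specific sequencer implementation.

```python
from collections import defaultdict, deque


def invalidated_batches(depends_on, reorged):
    """Return every batch that must be reorged once `reorged` is orphaned.

    depends_on maps a batch id to the list of batch ids it references.
    """
    # Invert the map: for each upstream batch, which batches reference it?
    dependents = defaultdict(list)
    for batch, deps in depends_on.items():
        for dep in deps:
            dependents[dep].append(batch)

    # Breadth-first walk from the orphaned batch through its dependents.
    seen = {reorged}
    queue = deque([reorged])
    while queue:
        current = queue.popleft()
        for child in dependents[current]:
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


# Hypothetical batches: A2 and B2 reference shared batch S1, A3 builds on A2,
# and C1 has no dependency on S1.
depends_on = {"A2": ["S1"], "B2": ["S1"], "A3": ["A2"], "C1": []}
print(sorted(invalidated_batches(depends_on, "S1")))
```

Only batches that reference the orphaned input, directly or transitively, are rolled back; unrelated batches such as `C1` stay untouched.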

Operators, prioritize shared sequencer fairness too. Uneven tx ordering favors big players, eroding trust. Dashboards at SharedSeqWatch.com flag these, empowering you to pivot fast. Catch these swings early, and your rollups scale smoother than the competition.

Real-world data from SharedSeqWatch.com shows fairness scores dipping below 90% during peak hours, signaling shared sequencer fairness issues that savvy operators fix by rotating nodes. I've caught 15-20% L2 token pumps by entering positions when these metrics rebound, proving monitoring isn't just defensive; it's offensive for alpha.

Benchmarking Rollup Performance: Metrics That Matter for SharedSeqWatch

Drilling into rollup performance benchmarks, top shared sequencers clock latencies under 4 seconds with reorg rates below 0.5%. Compare that to solo sequencers hitting 8+ seconds during L1 congestion, as arXiv scalability papers highlight. Fairness? Aim for 95%+ equitable ordering, where no wallet dominates more than 10% of slots. Our dashboards slice this by rollup, namespace, and time, letting you stack-rank providers like Maven 11 sets against HotShot or Arbitrum-style ops.
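Two of those benchmarks are easy to compute yourself. The sketch below assumes you can export the sender of each ordering slot and the batch counts for a time window; the function names and sample data are hypothetical, and the 10% and 0.5% limits are the ones quoted above.

```python
from collections import Counter

MAX_SENDER_SHARE = 0.10  # no wallet should hold more than 10% of slots
MAX_REORG_RATE = 0.005   # reorg rate should stay below 0.5%


def max_sender_share(slot_senders):
    """Largest fraction of ordering slots held by any single sender."""
    if not slot_senders:
        raise ValueError("need at least one slot")
    counts = Counter(slot_senders)
    return max(counts.values()) / len(slot_senders)


def reorg_rate(reorged_batches, total_batches):
    """Fraction of batches in the window that were reorged."""
    if total_batches <= 0:
        raise ValueError("total_batches must be positive")
    return reorged_batches / total_batches


def meets_benchmarks(slot_senders, reorged_batches, total_batches):
    """True when both the sender-share and reorg-rate limits hold."""
    return (
        max_sender_share(slot_senders) <= MAX_SENDER_SHARE
        and reorg_rate(reorged_batches, total_batches) < MAX_REORG_RATE
    )


# Hypothetical window of 10 slots in which wallet 0xa holds 3 of them.
slots = ["0xa", "0xa", "0xa", "0xb", "0xb", "0xc", "0xd", "0xe", "0xf", "0x1"]
print(max_sender_share(slots), meets_benchmarks(slots, 4, 1000))
```

Here the reorg rate passes (4 in 1,000 batches) but the window still fails because one wallet holds 30% of the slots.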

Why obsess over these? Poor benchmarks mean user exodus to snappier L2s. ZK-rollups, per DROPS analysis, amplify this: their proof gen can't afford reorg-induced restarts. Centralized sequencer critiques, like the bnbstatic report, underscore why shared models win, but only with constant shared sequencer monitoring.

Shared sequencers shine as public goods, per LinkedIn deep dives, but demand proactive tuning. Cube Exchange nails it: independent ordering scales multiple chains without the silos.

Optimizing in Practice: Mitigate Reorgs and Slash Latency Now

Batch conditioning flips the script on sequencer reorgs. Embed dependencies in your rollup batches, so if an upstream L1 or shared input reorgs, your L2 follows suit automatically. Pair this with preconfs from reliable shared sequencers, and you cut latency to 2 seconds without chaos. Ethresear.ch threads on embedded rollups back this: atomicity across rollups prevents orphan cascades.

For denial-of-sequencing attacks, GitHub research urges fresher L2 state checks. Monitor pre-execution lag via live feeds; if it exceeds 1 block, upgrade your polling. Ethereum Research's based rollup path offers a migration playbook: start centralized, layer in decentralized proposers, then full based sequencing. Each step needs Ethereum rollup latency under control.

Here's my trader's take: When reorgs spike, L2 volumes dip 30%, but recoveries signal strength. Swing in on dips flagged by SharedSeqWatch.com, exit at fairness highs. Node operators, use our historicals to backtest tweaks; I've seen latency optimizations yield 2x throughput swings.

Transitioning shared setups? Poll HotShot ledgers smartly, namespace by namespace, avoiding blanket fetches that bloat latency. Arbitrum's dynamic batching adapts to costs; mimic it for your stack.

Rollups monitor the state of the HotShot ledger and periodically fetch the transactions corresponding to their namespace. By doing so they obtain a rollup block.
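In code terms, that fetch amounts to filtering a shared block by namespace while preserving the shared order. The sketch below is a toy model, not the HotShot API: the `Tx` type, its fields, and the `rollup_block` helper are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    namespace: int   # identifies which rollup the transaction belongs to
    payload: bytes


def rollup_block(shared_block, namespace):
    """Keep only one rollup's transactions, in the order the sequencer fixed."""
    return [tx for tx in shared_block if tx.namespace == namespace]


# Hypothetical shared block interleaving transactions for rollups 1 and 2.
shared_block = [Tx(1, b"a"), Tx(2, b"b"), Tx(1, b"c"), Tx(2, b"d")]
print([tx.payload for tx in rollup_block(shared_block, 1)])
```

A real client would ask the sequencer for its namespace directly rather than download the whole block, which is the point of avoiding blanket fetches.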

That's the edge: granular, real-time insights turn vulnerabilities into velocity.

Future-Proof Your Rollups: Actionable Monitoring Roadmap

Start today with SharedSeqWatch.com: Set alerts for latency over 5s, reorgs above 1%, fairness under 92%. Benchmark weekly against peers; if you're lagging, probe L1 read strategies. Implement conditioning universally; test in devnets first.
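If you run your own alerting, the three thresholds can be expressed as a small rule check. This is a minimal sketch, not the SharedSeqWatch.com alert API: the `Metrics` type and `alerts` function are hypothetical, and rates are assumed to be fractions rather than percentages.

```python
from dataclasses import dataclass


@dataclass
class Metrics:
    latency_s: float   # average latency in seconds
    reorg_rate: float  # fraction of batches reorged, 0.01 == 1%
    fairness: float    # fairness score as a fraction, 0.92 == 92%


def alerts(m):
    """Return the names of the thresholds from the roadmap that are breached."""
    fired = []
    if m.latency_s > 5.0:
        fired.append("latency")
    if m.reorg_rate > 0.01:
        fired.append("reorg")
    if m.fairness < 0.92:
        fired.append("fairness")
    return fired


# Hypothetical reading: slow and unfair, but reorgs within limits.
print(alerts(Metrics(latency_s=6.2, reorg_rate=0.004, fairness=0.90)))
```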

Decentralization isn't binary. Progressive paths beat big-bang shifts, letting you harvest momentum as based sequencing matures. Catch sequencer reorgs early, benchmark ruthlessly, and watch your rollups, and your trades, outperform. The data doesn't lie; it's your sequencer for scaling success.
